There is a specific moment that keeps repeating itself in enterprise KM conversations right now.

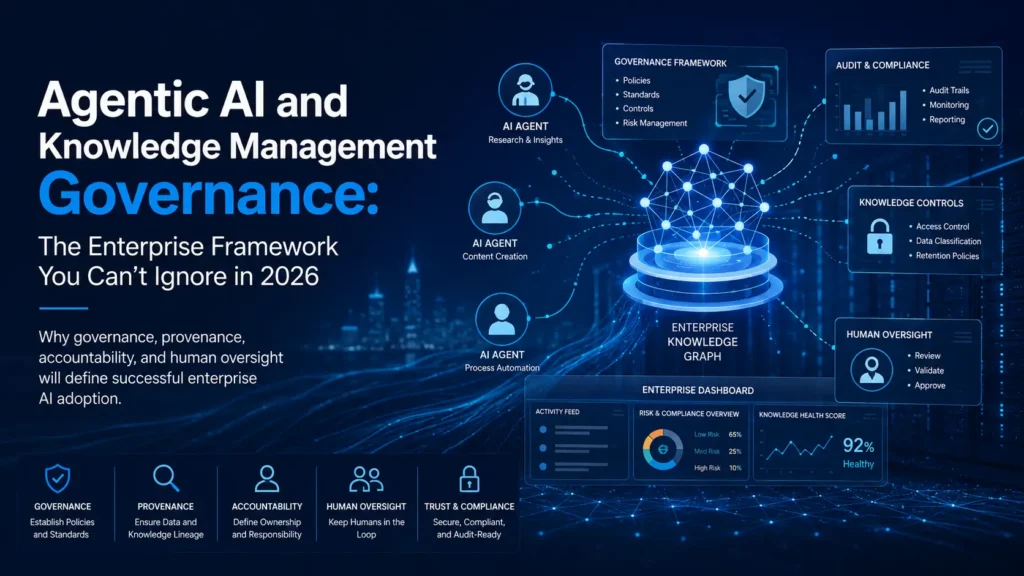

A technology leader, usually someone from IT or a newly appointed Head of AI, walks into a meeting and announces that the organization is deploying AI agents across its workflows. The agents will search the knowledge base, summarize documents, answer employee queries, and in some configurations, update records and tag new content automatically.

The KM professional in the room nods along. They have been fielding these conversations for months. But somewhere around the third slide, they start asking questions nobody in the room has answers to: Who reviews what the agent writes into the knowledge base? What happens when two agents contradict each other? If the agent surfaces a three-year-old policy that was never formally retired, and a decision gets made on it, who is accountable?

The silence that follows those questions is where this article begins.

The Shift That Changes Everything

For about thirty years, knowledge management operated on a fundamentally passive model. Humans created knowledge. Systems stored and indexed it. Other humans searched for and retrieved it. Governance in that world was about quality at the point of creation: editorial standards, taxonomy rules, approval workflows, retention schedules.

That model assumed a simple truth: knowledge did not move unless a person moved it.

Agentic AI destroys that assumption.

In 2026, AI agents are not just retrieval tools. They are participants in the knowledge lifecycle. They ingest content, synthesize it, generate new artifacts from it, and in the most advanced implementations, write back into the systems that every employee in the organization depends on. They do this autonomously, continuously, and often invisibly.

The most sophisticated enterprises are no longer asking ‘Where did we put that document?’ They are asking ‘What does our organization know, and how do we let our AI agents act on it in real time?’

That is a profound shift. And it demands a governance response that most KM functions have not yet built.

This article is the framework for building it.

What “Agentic AI” Actually Means in a KM Context

Before we go further, let’s be precise about what we mean, because the word ‘agentic’ has become so overloaded in vendor conversations that it has nearly lost meaning.

An AI agent, in the context of knowledge management, is a system that can:

- Perceive — read from structured and unstructured knowledge sources

- Reason — interpret, synthesize, and draw conclusions across those sources

- Act — take actions: generate content, update records, trigger workflows, notify people

- Iterate — use the results of its actions to inform next steps

What separates a true agent from a sophisticated search tool or a chatbot is the third and fourth capabilities. The moment your AI system can act on your knowledge base and learn from the results of that action, you have crossed into agentic territory.

Agentic AI will evolve through distinct phases, from assistance, where AI supports discrete tasks, to augmentation, where it manages multi-step processes within defined domains, to autonomy, where it operates across domains and makes smarter decisions guided by high-level business objectives.

Most enterprise deployments in 2026 sit somewhere between assistance and augmentation. The organizations that are already stress-testing autonomous operation are the ones discovering, painfully, why governance matters.

Here is what “acting on the knowledge base” looks like in practice, so we are talking about the same things:

- An agent that reads a cluster of technical support tickets and automatically drafts a new knowledge article based on patterns it identifies

- An agent that detects a policy document has not been accessed or reviewed in 18 months and flags it, or in a more autonomous configuration, archives it

- An agent that cross-references a customer query against multiple internal documents and synthesizes a response that gets logged as an official answer

- Multi-agent systems where a Researcher agent, a Synthesizer agent, and a Validator agent each handle distinct steps in a knowledge production workflow, passing outputs between themselves without human handoff

Each of these scenarios carries governance implications that your current KM framework was never designed to handle.

Why Governance Is the Gap Nobody Planned For

Here is the uncomfortable truth: most enterprise AI initiatives that have stalled or failed in the past two years did not fail because of the technology. They failed because the organizational infrastructure underneath the technology (the knowledge, the governance, the ownership) was not ready.

According to MIT’s GenAI Divide study, 95% of enterprise pilots showed no measurable ROI, not because of model quality, but because of workflow, context, and knowledge gaps.

That data point deserves more attention than it typically gets. The models work. The infrastructure to govern what they do with your organizational knowledge does not yet exist in most enterprises.

Deploying AI is often easier than building the structure, governance, and ownership needed to trust it at scale.

Governance in the context of agentic AI is not about restricting what AI can do. It is about creating the conditions under which AI actions are trustworthy, traceable, correctable, and accountable. That distinction matters enormously for how KM professionals should position this work internally. You are not the department of “no.” You are the function that makes trusted intelligence at scale possible.

The Five Governance Gaps That Will Break Your Agentic KM System

Before we get to the framework, let’s name the specific problems. These are not hypothetical. They are happening in organizations that deployed agentic systems without adequate KM governance architecture.

Gap 1: The Write-Back Problem

Traditional KM governance controlled the front door, who could create and publish knowledge. Agentic AI creates a side door that most governance frameworks cannot see.

When an agent generates a knowledge artifact and writes it into a repository, several things happen simultaneously that your current governance model does not account for:

Authorship is ambiguous. The agent produced it, but a prompt authored by a human triggered it. Who owns the content? Who validates it?

Review workflows are bypassed. Most approval processes assume a human submitted the content. Agent-generated content often routes around these workflows entirely.

Volume overwhelms human review capacity. A single agent can generate hundreds of knowledge artifacts in a day. The governance assumption that a human will review each one before it becomes “official knowledge” does not survive contact with agentic velocity.

The organizations handling this well have implemented what some practitioners are calling a knowledge staging environment — a buffer layer where agent-generated content exists in a “pending” state, is tagged explicitly as AI-generated, and must meet a threshold of validation criteria before it becomes part of the trusted knowledge base. The specific threshold depends on the risk classification of the knowledge domain.
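To make the staging idea concrete, here is a minimal sketch in Python. It assumes a hypothetical `StagedArtifact` record and an illustrative `REQUIRED_CHECKS` table; the check names and the per-tier thresholds are placeholders, not a recommended policy.

```python
from dataclasses import dataclass, field

# Hypothetical validation criteria per risk tier. An artifact must pass
# every listed check before promotion. Names and values are illustrative.
REQUIRED_CHECKS = {
    2: {"human_review", "source_check"},
    3: {"source_check"},
}

@dataclass
class StagedArtifact:
    title: str
    tier: int
    ai_generated: bool = True      # tagged explicitly as AI-generated
    status: str = "pending"        # stays pending until validation is met
    passed_checks: set = field(default_factory=set)

def try_promote(artifact: StagedArtifact) -> bool:
    """Promote only if every check required for the tier has passed."""
    required = REQUIRED_CHECKS.get(artifact.tier)
    if required is None:
        return False  # no promotion rule for this tier: remain pending
    if required <= artifact.passed_checks:
        artifact.status = "active"
        return True
    return False

draft = StagedArtifact(title="VPN reset procedure", tier=2)
draft.passed_checks.add("source_check")
print(try_promote(draft), draft.status)   # not promoted: no human review yet
draft.passed_checks.add("human_review")
print(try_promote(draft), draft.status)   # promoted once both checks pass
```

The design choice worth noticing is the default: an artifact with no matching rule stays pending rather than slipping through.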

Gap 2: The Provenance Collapse

Provenance, knowing where knowledge came from, who created it, and what it was based on, has always been a KM concern. Agentic AI makes it structurally harder in ways that matter legally and operationally.

When an agent synthesizes a response across twelve internal documents, three external sources, and the organization’s historical decision logs, the resulting artifact does not carry a clear intellectual lineage. It carries a citation list at best. The reasoning process, which documents were weighted, which were discarded, why one source was trusted over another, is invisible.

Real-world RAG deployment has revealed a critical gap: the inability to explain answers to auditors. In a regulated industry, this is not an inconvenience. It is a liability. When a compliance officer asks “on what basis was this knowledge claim made,” the answer cannot be “the agent decided.”

The governance response here is an audit-grade provenance layer — infrastructure that logs not just what the agent produced, but the full retrieval and reasoning chain behind it. This is technically complex, but the KM function’s role is to define the requirement, not build the technology.
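One way to express that requirement is as a record shape the KM function can hand to the technology team. The sketch below is an assumption about what such a record might contain; the class names, field names, and identifiers (`ProvenanceRecord`, `SourceUse`, `KB-1042`) are hypothetical.

```python
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone
import json

@dataclass
class SourceUse:
    doc_id: str
    version: str
    used: bool      # False means retrieved but discarded
    reason: str     # why the source was kept or dropped

@dataclass
class ProvenanceRecord:
    artifact_id: str
    agent_id: str
    accountable_human: str
    created_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )
    sources: list = field(default_factory=list)

    def basis(self) -> list:
        """Answer 'on what basis was this claim made?' with the
        document versions that actually informed the artifact."""
        return [f"{s.doc_id}@{s.version}" for s in self.sources if s.used]

record = ProvenanceRecord("KB-1042", "synthesizer-01", "j.doe")
record.sources.append(SourceUse("POL-7", "v3", True, "current policy"))
record.sources.append(SourceUse("POL-7", "v1", False, "superseded"))
print(record.basis())
print(json.dumps(asdict(record), indent=2))
```

Recording discarded sources alongside used ones is what turns a citation list into something closer to a reasoning trail.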

Gap 3: The Accountability Vacuum

Traditional accountability in KM was clear, if imperfect. A subject matter expert authored content. A knowledge manager reviewed it. A business owner approved it. When something went wrong, you could trace the lineage.

Agentic AI introduces actors into the knowledge lifecycle who cannot be held accountable in the traditional sense. You cannot summon an AI agent to a post-incident review. You cannot ask it why it made a particular decision. You can only examine its outputs and, if you built the logging infrastructure, its inputs.

Unlike traditional software that executes predefined logic, agents make runtime decisions, access sensitive data, and take actions with real business consequences.

The governance question is not “how do we make the agent accountable” — that is the wrong question. The governance question is: at every point where an agent takes a meaningful action on organizational knowledge, which human is accountable for that action?

That question must be answered before deployment, not after an incident. Role clarity — who owns agent configuration, who approves agent access to specific knowledge domains, who reviews agent outputs, who can override or roll back agent actions — must be formalized and documented.

Gap 4: Autonomy Creep

This is the governance gap that tends to emerge slowly and is hardest to catch.

Organizations typically start an agentic deployment conservatively. The agent has read-only access. It summarizes. It recommends. It does not act. But over time, sometimes deliberately, sometimes through incremental feature additions by well-intentioned technology teams, the agent’s permissions expand. It gets write access to tag content. Then to archive content. Then to create content. Then to update records.

Each step seemed reasonable in isolation. The cumulative effect is an agent operating at a scope of autonomy that was never explicitly authorized by the people responsible for knowledge governance.

Leading organizations are implementing “bounded autonomy” architectures with clear operational limits, escalation paths to humans for high-stakes decisions, and comprehensive audit trails of agent actions.

Governance must define, and enforce, explicit autonomy boundaries for each agent role, with a formal change management process required to expand them. This is not a technical safeguard alone. It is an organizational policy.
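A simple check can make autonomy creep visible. The sketch below assumes a hypothetical authorized baseline per agent role and a set of approved change requests; both structures and all names in them are illustrative.

```python
# Authorized scope per agent role, as approved by knowledge governance.
# Role and action names are hypothetical.
AUTHORIZED = {"tagger-agent": {"read", "tag"}}

# Approved change requests, recorded as (agent role, action) pairs.
APPROVED_CHANGES = {("tagger-agent", "archive")}

def unauthorized_expansion(role: str, requested: set) -> set:
    """Return requested actions that exceed the authorized scope
    and have no approved change request behind them."""
    baseline = AUTHORIZED.get(role, set())
    extra = requested - baseline
    return {a for a in extra if (role, a) not in APPROVED_CHANGES}

# 'archive' went through change management; 'create' did not.
print(unauthorized_expansion("tagger-agent", {"read", "tag", "archive", "create"}))
```

Running a comparison like this against live agent configuration on a schedule would surface permissions that grew without a governance decision behind them.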

Gap 5: The Multi-Agent Blindspot

Single-agent governance is relatively tractable. Multi-agent governance is genuinely hard and almost nobody has solved it yet.

When you have a Researcher agent, a Synthesizer agent, and a Validator agent operating as a chain, several new governance problems emerge:

Intermediate outputs are not governed. The content that passes between agents in a workflow often has no review step and no audit trail. It is ephemeral by design. But it shapes the final output.

Error propagation is silent. If the Researcher agent retrieves a stale document and the Synthesizer agent builds on it without flagging the issue, the Validator agent may approve an artifact with flawed foundations, and your governance system never saw the problem.

Agent identity is not standardized. When multiple agents touch a knowledge artifact, attributing the action to a specific agent, and therefore to the human accountable for that agent, becomes genuinely complex.

Multi-agent governance requires logging at the workflow level, not just the artifact level. Every agent interaction, every intermediate handoff, every decision point in the chain must be captured and attributable.
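As a rough illustration of workflow-level logging, the sketch below records each handoff in a chain and attributes it to an agent and that agent's accountable human. The `WorkflowLog` class, the `OWNERS` mapping, and the flag format are all assumptions made for the example.

```python
from dataclasses import dataclass, field

# Hypothetical mapping from agent to its accountable human owner.
OWNERS = {"researcher": "a.khan", "synthesizer": "b.lee", "validator": "c.ortiz"}

@dataclass
class WorkflowLog:
    workflow_id: str
    handoffs: list = field(default_factory=list)

    def record(self, agent: str, output: str, flags: tuple = ()) -> None:
        """Log one intermediate handoff, attributed to an agent and its owner."""
        self.handoffs.append({
            "step": len(self.handoffs) + 1,
            "agent": agent,
            "owner": OWNERS[agent],
            "output": output,
            "flags": list(flags),
        })

    def inherited_flags(self) -> list:
        """Collect every flag raised anywhere in the chain, so an issue
        raised early remains visible at the end of the workflow."""
        return [(h["agent"], f) for h in self.handoffs for f in h["flags"]]

log = WorkflowLog("wf-001")
log.record("researcher", "3 documents retrieved", flags=("stale_source:POL-7",))
log.record("synthesizer", "draft article")
log.record("validator", "approved")
print(log.inherited_flags())
```

Here the Validator approved the artifact, yet the stale-source flag from the first step is still retrievable from the workflow record.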

The Enterprise Governance Framework

What follows is a practical architecture built from what is actually working in organizations that have deployed agentic KM systems at enterprise scale. It has five layers, each of which must be addressed. You cannot skip to Layer 4 without completing Layers 1 and 2 first.

Layer 1: Knowledge Asset Classification

You cannot govern what you have not classified.

Before any agent touches your knowledge base, every content type in your ecosystem needs a risk-tiered classification. This classification determines what level of agent autonomy is permitted for each content category.

Tier 1: Authoritative Knowledge. Policies, compliance documentation, legal frameworks, regulated procedures. Agent access: read-only. No agent may create, modify, archive, or summarize Tier 1 knowledge for official use without human review and approval. Violation of this boundary should trigger an automatic alert.

Tier 2: Operational Knowledge. Process documentation, technical guides, project records, meeting outputs. Agent access: read and draft-create. Agents may generate new Tier 2 content, but it enters a staging environment and requires human review before promotion to active status.

Tier 3: Contextual and Tacit Knowledge. Informal expertise, conversation captures, community content, discussion threads. Agent access: read, synthesize, and in defined contexts, create. This is the tier where most agentic automation begins and where the volume makes human review of every artifact impractical. Governance here focuses on sampling, anomaly detection, and periodic audits rather than individual review.

Tier 4: Transient Knowledge. Working documents, drafts, interim analysis, agent-to-agent communication. Agent access: full. This tier is explicitly not part of the trusted knowledge base. Governance focuses on preventing Tier 4 artifacts from being mistakenly treated as authoritative.
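The four tiers can be written down as a small policy table. The sketch below is one possible encoding; the action labels are illustrative, and the "defined contexts" qualifier on Tier 3 creation is simplified away.

```python
from enum import IntEnum

class Tier(IntEnum):
    AUTHORITATIVE = 1
    OPERATIONAL = 2
    CONTEXTUAL = 3
    TRANSIENT = 4

# Actions an agent may take without human approval, per tier.
# Action names are illustrative labels, not a standard vocabulary.
AGENT_ACTIONS = {
    Tier.AUTHORITATIVE: {"read"},
    Tier.OPERATIONAL: {"read", "draft"},
    Tier.CONTEXTUAL: {"read", "synthesize", "create"},
    Tier.TRANSIENT: {"read", "synthesize", "create", "modify", "archive"},
}

def check_action(tier: Tier, action: str) -> str:
    """Return 'allow' for permitted actions, 'alert' for a Tier 1
    boundary violation, and 'deny' for anything else out of scope."""
    if action in AGENT_ACTIONS[tier]:
        return "allow"
    return "alert" if tier == Tier.AUTHORITATIVE else "deny"

print(check_action(Tier.AUTHORITATIVE, "modify"))   # Tier 1 violation raises an alert
print(check_action(Tier.OPERATIONAL, "draft"))      # drafting Tier 2 content is allowed
```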

The classification work itself is KM work. It requires domain expertise, stakeholder consultation, and ongoing maintenance as new content types emerge. This is a core contribution KM professionals make to the governance architecture — and it is one that cannot be outsourced to technology teams.

Layer 2: Agent Permission Architecture

Once knowledge assets are classified, the next governance layer defines what each agent role is permitted to do with each tier.

Think of this as a permission matrix: agent roles across one axis, knowledge tiers across the other, and permitted actions (read, draft, create, modify, archive, promote) in each cell.

This matrix should be:

- Formally documented and version-controlled, not maintained informally in someone’s head

- Owned by a named individual — typically the Chief Knowledge Officer or equivalent, with IT as the implementing partner

- Reviewed on a defined cadence — every time a new agent is deployed, every time an existing agent’s capabilities change, and at minimum quarterly as organizational knowledge strategy evolves

The permission matrix also needs to capture escalation conditions — the specific circumstances under which an agent must pause and route to a human rather than proceeding autonomously. The definition of those conditions is a governance decision, not a technical one.

A Validator agent reviewing a synthesized document before it enters the knowledge base is a human-in-the-loop design by default. A Researcher agent that encounters a document classified as Tier 1 but never formally updated after a regulatory change — what should it do? Stop and escalate? Proceed and flag? The answer must be pre-specified, documented, and technically implemented.
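A pre-specified answer to that question might look like the sketch below. It pairs a permission matrix with a list of escalation conditions; the matrix contents, the document fields (`last_reviewed`, `regulation_changed`), and the "stop and escalate" outcome are all assumptions chosen for illustration.

```python
# Permission matrix: (agent role, knowledge tier) -> permitted actions.
# Roles, tiers, and actions are illustrative, not a recommended policy.
MATRIX = {
    ("researcher", 1): {"read"},
    ("researcher", 2): {"read"},
    ("synthesizer", 2): {"read", "draft"},
    ("synthesizer", 3): {"read", "draft", "create"},
}

def stale_tier1(doc: dict) -> bool:
    """Escalation condition: a Tier 1 document last reviewed before the
    most recent regulatory change (ISO dates compare correctly as strings)."""
    return doc["tier"] == 1 and doc["last_reviewed"] < doc["regulation_changed"]

ESCALATION_CONDITIONS = [stale_tier1]

def decide(role: str, action: str, doc: dict) -> str:
    """Check the matrix first, then the escalation conditions."""
    if action not in MATRIX.get((role, doc["tier"]), set()):
        return "deny"
    if any(cond(doc) for cond in ESCALATION_CONDITIONS):
        return "escalate"   # pause and route to a human
    return "proceed"

doc = {"tier": 1, "last_reviewed": "2024-03-01", "regulation_changed": "2025-01-15"}
print(decide("researcher", "read", doc))
```

Keeping the conditions in a plain list means a governance owner can add or retire one through change management without touching the decision logic.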

Layer 3: Provenance and Audit Infrastructure

Every meaningful agent action on the knowledge base must be logged in a way that is:

- Complete — what action was taken, by which agent, at what time, triggered by what input

- Traceable — for synthesis actions, which source documents were used, weighted, or discarded

- Queryable — compliance officers, knowledge managers, and auditors can retrieve the full history of any knowledge artifact on demand

- Tamper-evident — the audit log itself is protected from modification, including by other agents

This is primarily a technology architecture requirement, but it is driven by a governance specification. KM professionals need to define what the audit log must capture — not how to build it, but what questions it must be able to answer.
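For readers who want a feel for what "tamper-evident" can mean in practice, one common technique is a hash chain, where each log entry's hash covers the previous entry's hash. The sketch below uses Python's standard `hashlib` and `json` modules; the entry fields are hypothetical, and a production system would need more than this.

```python
import hashlib
import json

def entry_hash(entry: dict, prev_hash: str) -> str:
    """Hash the entry together with the previous entry's hash."""
    payload = json.dumps(entry, sort_keys=True) + prev_hash
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()

def append(log: list, entry: dict) -> None:
    """Add an entry whose hash links it to the entry before it."""
    prev = log[-1]["hash"] if log else ""
    log.append({"entry": entry, "hash": entry_hash(entry, prev)})

def verify(log: list) -> bool:
    """Recompute the chain. An edited entry, or one removed from the
    middle, makes a stored hash disagree with the recomputed one."""
    prev = ""
    for item in log:
        if item["hash"] != entry_hash(item["entry"], prev):
            return False
        prev = item["hash"]
    return True

log = []
append(log, {"agent": "synthesizer-01", "action": "create", "artifact": "KB-1"})
append(log, {"agent": "tagger-02", "action": "modify", "artifact": "KB-1"})
print(verify(log))                        # chain is intact
log[0]["entry"]["action"] = "archive"     # simulate tampering
print(verify(log))                        # chain no longer verifies
```

A chain like this does not detect truncation of the most recent entries on its own, so the latest hash would also need to be stored somewhere agents cannot write.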

The questions that matter most in an enterprise context:

- On what basis was this knowledge claim made?

- Which version of source documents was used at the time this artifact was created?

- Who configured the agent that produced this output?

- Has this artifact been modified since it was first created, and by whom or what?

- What human reviewed and approved this artifact before it became active knowledge?

The EU AI Act and emerging U.S. state-level regulations demand transparency, explainability, and ethical AI practices — and those demands are increasingly landing on the knowledge governance function. Building provenance infrastructure now is not overhead. It is compliance preparation.

Layer 4: Human Oversight Design

Human-in-the-loop is frequently misunderstood as a concession, an admission that AI is not reliable enough to operate without a human safety net. That framing is both wrong and strategically counterproductive.

Human oversight in agentic KM governance is a design choice about where human judgment creates the most value. The goal is not maximum human involvement. It is human involvement at exactly the right points.

Here is a practical model for where human oversight should be anchored:

At agent configuration. The decisions about what an agent can access, what it can do, and under what conditions it must escalate are human decisions that set the governance parameters everything else depends on. This is where the most consequential human judgment is applied.

At tier boundary crossings. When an agent generates content that may be promoted from Tier 3 to Tier 2, or from Tier 2 to Tier 1, a human review gate must exist. The crossing of a tier boundary changes the knowledge’s authority level and therefore the organizational risk it carries.

At anomaly flags. Rather than reviewing every agent output, which is neither feasible nor the right use of human expertise, governance systems should be configured to surface anomalies: agent outputs that are statistically unusual, that contradict existing Tier 1 knowledge, that involve source documents not accessed in an unusually long period, or that cover topics with high regulatory sensitivity.
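Three of those anomaly conditions can be sketched as simple rules. The example below assumes a hypothetical output record, an illustrative list of sensitive topics, and an arbitrary staleness cutoff; statistical outlier detection is left out because it depends on the organization's own baseline data.

```python
from datetime import date

SENSITIVE_TOPICS = {"privacy", "safety"}   # hypothetical list
STALE_AFTER_DAYS = 540                     # roughly 18 months, an assumed cutoff

def anomaly_flags(output: dict, today: date) -> list:
    """Return the reasons, if any, this agent output needs human review."""
    flags = []
    if output.get("contradicts_tier1"):
        flags.append("contradicts_tier1")
    if any((today - d).days > STALE_AFTER_DAYS
           for d in output["source_last_accessed"]):
        flags.append("stale_source")
    if SENSITIVE_TOPICS & set(output["topics"]):
        flags.append("regulatory_sensitivity")
    return flags

output = {
    "contradicts_tier1": False,
    "source_last_accessed": [date(2023, 1, 1), date(2025, 11, 1)],
    "topics": ["privacy", "onboarding"],
}
print(anomaly_flags(output, date(2026, 1, 1)))
```

Outputs that return an empty list move on without review, which is what keeps human attention on the cases that warrant it.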

At defined intervals for active agent roles. Any agent with Tier 1 or Tier 2 write access should be subject to periodic human performance review, covering not just the outputs it created but the decisions it made, including content it chose not to create or actions it chose to escalate.

AI increases access to insights, but people ensure quality, relevance, and trust. Making sources clear, outputs explainable, and ownership explicit is a core governance design principle.

Layer 5: Continuous Governance and Self-Monitoring

Static governance frameworks do not survive contact with agentic systems. The systems evolve. Organizational knowledge evolves. Regulatory requirements evolve. The governance framework must evolve with them.

The most mature organizations in this space are deploying governance agents — dedicated AI agents whose specific function is to monitor other agents’ knowledge management behaviors, flag policy violations, track anomaly patterns, and surface governance gaps for human review. More sophisticated implementations include security agents that detect anomalous agent behavior across the full knowledge ecosystem.

Beyond governance agents, continuous governance requires:

Knowledge health metrics that include agentic contributions. Your KM dashboard should show not just knowledge creation rates, but agent creation rates vs. human creation rates, agent-generated content accuracy rates over time, and the rate at which agent-generated content is being corrected or retracted after promotion.
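Two of those metrics are easy to compute once artifacts carry the right fields. The sketch below assumes each artifact record has hypothetical `author`, `promoted`, and `corrected_after_promotion` fields; the sample data is made up for the example.

```python
def knowledge_health(artifacts: list) -> dict:
    """Compute the agent share of creation and the rate at which
    promoted agent content was later corrected or retracted."""
    agent = [a for a in artifacts if a["author"] == "agent"]
    promoted = [a for a in agent if a["promoted"]]
    corrected = [a for a in promoted if a["corrected_after_promotion"]]
    return {
        "agent_share": len(agent) / len(artifacts) if artifacts else 0.0,
        "post_promotion_correction_rate": (
            len(corrected) / len(promoted) if promoted else 0.0
        ),
    }

sample = [
    {"author": "agent", "promoted": True, "corrected_after_promotion": True},
    {"author": "agent", "promoted": True, "corrected_after_promotion": False},
    {"author": "agent", "promoted": False, "corrected_after_promotion": False},
    {"author": "human", "promoted": True, "corrected_after_promotion": False},
]
print(knowledge_health(sample))
```

A rising post-promotion correction rate is a signal that review gates are letting too much through, which is a governance finding rather than a model finding.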

Regular governance audits, not just compliance audits. A compliance audit asks “did we follow the rules?” A governance audit asks “are the rules still the right rules?” In a fast-moving agentic environment, rules that made sense six months ago may be creating friction without providing proportionate protection, or, more dangerously, may have gaps that were not visible when they were written.

An escalation culture, not just an escalation process. The most technically sophisticated governance architecture fails if the people operating within it are not confident in raising concerns. KM professionals have a cultural leadership role here, normalizing the reporting of governance edge cases, unexpected agent behaviors, and situations where the framework did not provide clear guidance.

Where to Start: A Maturity-Based Entry Point

This framework is comprehensive by design. But “comprehensive” is not the same as “start everywhere simultaneously.”

Only about one-third of organizations report maturity levels of three or higher in strategy, governance, and agentic AI governance. Organizational alignment and oversight structures are struggling to keep pace with the rapid expansion of AI deployment.

Your entry point depends on where your organization is today.

If you have agentic systems already deployed without a governance framework: Start with an emergency classification exercise for your highest-risk knowledge domains (Tier 1 and Tier 2). Simultaneously, get a read-only audit of what your agents have already done — what they have created, modified, or surfaced. You may find the situation is manageable. You may find it is not. Either way, you need to know before the next regulatory examination or the next incident.

If you are in procurement or pre-deployment planning: Your leverage is at Layer 2 — the permission architecture. This is the point at which governance requirements can be built into agent configuration rather than retrofitted. Insist that agent permission architecture is a governance-led conversation, not a technology-led one.

If you are at early maturity with basic governance in place: Focus your energy on Layer 3 (provenance infrastructure) and Layer 5 (continuous governance). These are the layers where most organizations have the largest gaps and where the regulatory exposure is growing fastest.

If you have a reasonably mature framework: You are likely underinvested in multi-agent governance and governance agents. These are the frontiers where the next wave of risk is emerging and where the organizations investing now will be two years ahead of the field.

The KM Professional’s Role That Cannot Be Automated

I want to address something directly, because it is present at every KM conference and in the background of every conversation about agentic AI.

Some KM professionals are worried that agentic AI will make their function redundant. That if AI can capture, organize, synthesize, and surface knowledge automatically, the human role in knowledge management disappears.

That worry, while understandable, is misdirected.

What agentic AI automates is the mechanical execution of knowledge management processes. What it cannot provide, and what becomes more valuable as systems become more autonomous, is the judgment layer: knowing which knowledge matters, understanding why an organizational context makes one piece of knowledge more authoritative than another, sensing when a synthesized output is technically accurate but organizationally wrong, and deciding how governance frameworks should evolve as conditions change.

KM professionals should lean into these capabilities, offering their expertise to guide how to craft the dialogues, pinpoint expertise, and validate outputs, while letting the automation handle the execution and volume work.

The governance framework described in this article is almost entirely a human construct. Agents can help monitor it. They cannot design it, own it, or bear responsibility for it. That is the KM function’s work, and it is work that requires the kind of organizational understanding, domain expertise, and professional judgment that only comes from years of working inside these systems.

In the agentic era, the KM professional is not less necessary. They are the person who decides what the agents are allowed to know and what they are allowed to do with it. That is not a peripheral role. It is the architecture of organizational intelligence.

What 2027 Will Punish You for Not Doing in 2026

I will close with a candid forecast, because the organizations that will face the most serious problems in the next 18-24 months are largely predictable.

Organizations that deployed agentic systems without Layer 1 classification will discover that agents have been treating all knowledge equally — surfacing archived, outdated, or conditionally valid knowledge as authoritative. The remediation will be painful and expensive, and it will be happening while competitors who did this work earlier are accelerating past them.

Organizations without provenance infrastructure will face regulatory examination under the EU AI Act and equivalent frameworks, unable to demonstrate the basis on which AI-mediated knowledge decisions were made. This is not a theoretical risk. It is a near-term compliance reality that is already landing on legal and compliance teams.

Organizations that treated governance as a technology team responsibility will find that the governance framework was built to accommodate the technology rather than to protect the organization. When something goes wrong, and at scale, something will go wrong, there will be no clear human accountable, no audit trail, and no remediation path.

Organizations that built governance and communicated it clearly will have something invaluable: the organizational confidence to deploy agents in increasingly high-value scenarios, because the trust infrastructure is in place to support it.

The shift happening in 2026 is from viewing governance as compliance overhead to recognizing it as an enabler. Mature governance frameworks increase organizational confidence to deploy agents in higher-value scenarios, creating a virtuous cycle of trust and capability expansion.

That virtuous cycle is what we are building toward. The framework in this article is the foundation.

The organizations that will lead in knowledge intelligence over the next decade are not the ones that deployed the most sophisticated agents. They are the ones that governed those agents well enough to trust them with what matters most, the accumulated knowledge of their people, their decisions, and their institutional memory.

That work starts now. And it starts with the knowledge management function choosing to lead it.

The Five-Layer Framework: At a Glance

| Layer | What It Governs | Who Owns It |
|---|---|---|
| 1. Knowledge Asset Classification | Risk tier for each content type; what agent access is permitted | KM function, with domain business owners |
| 2. Agent Permission Architecture | What each agent role can do with each knowledge tier; escalation conditions | CKO / KM function, implemented by IT |
| 3. Provenance & Audit Infrastructure | Complete, queryable, tamper-evident logs of all agent actions | IT architecture, governed by KM / Legal / Compliance |
| 4. Human Oversight Design | Where humans enter the workflow and why; review gates and anomaly flags | KM function with business process owners |
| 5. Continuous Governance | How the framework monitors itself and evolves over time | KM function, supported by governance agents |

This article is the first in a series on Agentic AI and Knowledge Management Governance. Subsequent articles explore bounded autonomy architecture, knowledge provenance as a legal requirement, human-in-the-loop design patterns, and the multi-agent governance challenge in depth.
